For decades, copper cabling has been considered fundamentally limited compared to optical fiber. As data rates climbed from 100 Mbps to 1 Gbps and then to 10 Gbps, the number of copper pairs, cable thickness, power consumption, and signal-processing complexity all increased dramatically. The prevailing assumption became simple: very high data rates require either many copper pairs or fiber.

Yet recent developments challenge that assumption. Achieving 10 Gbps full-duplex over a single copper pair is not only theoretically possible but practically achievable over short distances. The IEEE 802.3ch-2020 standard defines 10GBASE-T1, which delivers 10 Gbps over a maximum distance of 15 meters with up to four in-line connectors—specifically designed for automotive backbone networks, high-resolution ADAS camera links, and industrial automation.

This raises an important question: how can such a narrow physical medium support such extreme throughput?

The answer lies in a combination of advanced modulation schemes, aggressive signal processing, full-duplex echo cancellation, and the exploitation of higher-frequency spectra than traditionally used in Ethernet. Importantly, this is not a single breakthrough, but rather the convergence of techniques that have matured across Ethernet, DSL, and high-speed serial links.

This article explores the engineering foundations behind 10 Gbps over a single copper pair, focusing on physical-layer mechanisms, trade-offs, and real-world constraints. The target audience is engineers familiar with digital communications, Ethernet PHYs, or high-speed signaling.

Copper transmission lines are governed by several well-known impairments that worsen rapidly with frequency and distance:

Skin effect causes signal attenuation to increase approximately with the square root of frequency. At 4 GHz (required for 10GBASE-T1), skin depth in copper is only approximately 1 micrometer, forcing current to the conductor surface and dramatically increasing resistance. This is why cable gauge and conductor quality critically impact 10GBASE-T1 performance; poor conductors cannot support 15 meters at these frequencies.
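The roughly 1 micrometer figure can be checked with the standard skin-depth formula, δ = sqrt(ρ / (π f μ₀ μᵣ)). The sketch below is illustrative only: the copper resistivity is an assumed room-temperature textbook value, and the frequencies other than 4 GHz are chosen just for comparison.

```python
import math

MU_0 = 4 * math.pi * 1e-7   # permeability of free space, H/m
RHO_COPPER = 1.68e-8        # assumed resistivity of copper near 20 C, ohm*m


def skin_depth_m(freq_hz, resistivity=RHO_COPPER, mu_r=1.0):
    """Skin depth in meters: sqrt(rho / (pi * f * mu0 * mu_r))."""
    return math.sqrt(resistivity / (math.pi * freq_hz * MU_0 * mu_r))


# Comparison frequencies are illustrative; only 4 GHz comes from the text.
for f in (100e6, 500e6, 4e9):
    print(f"{f / 1e6:7.0f} MHz -> {skin_depth_m(f) * 1e6:.2f} um")
```

Because skin depth falls with the square root of frequency, quadrupling the frequency halves the usable conductor depth, which is the mechanism behind the square-root attenuation trend described above.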

At several hundred MHz or above, insertion loss becomes severe, especially on thin conductors. Unlike traditional Ethernet, which can use unshielded twisted-pair (UTP) cabling, 10GBASE-T1 requires shielded cabling to maintain signal integrity at multi-GHz frequencies.

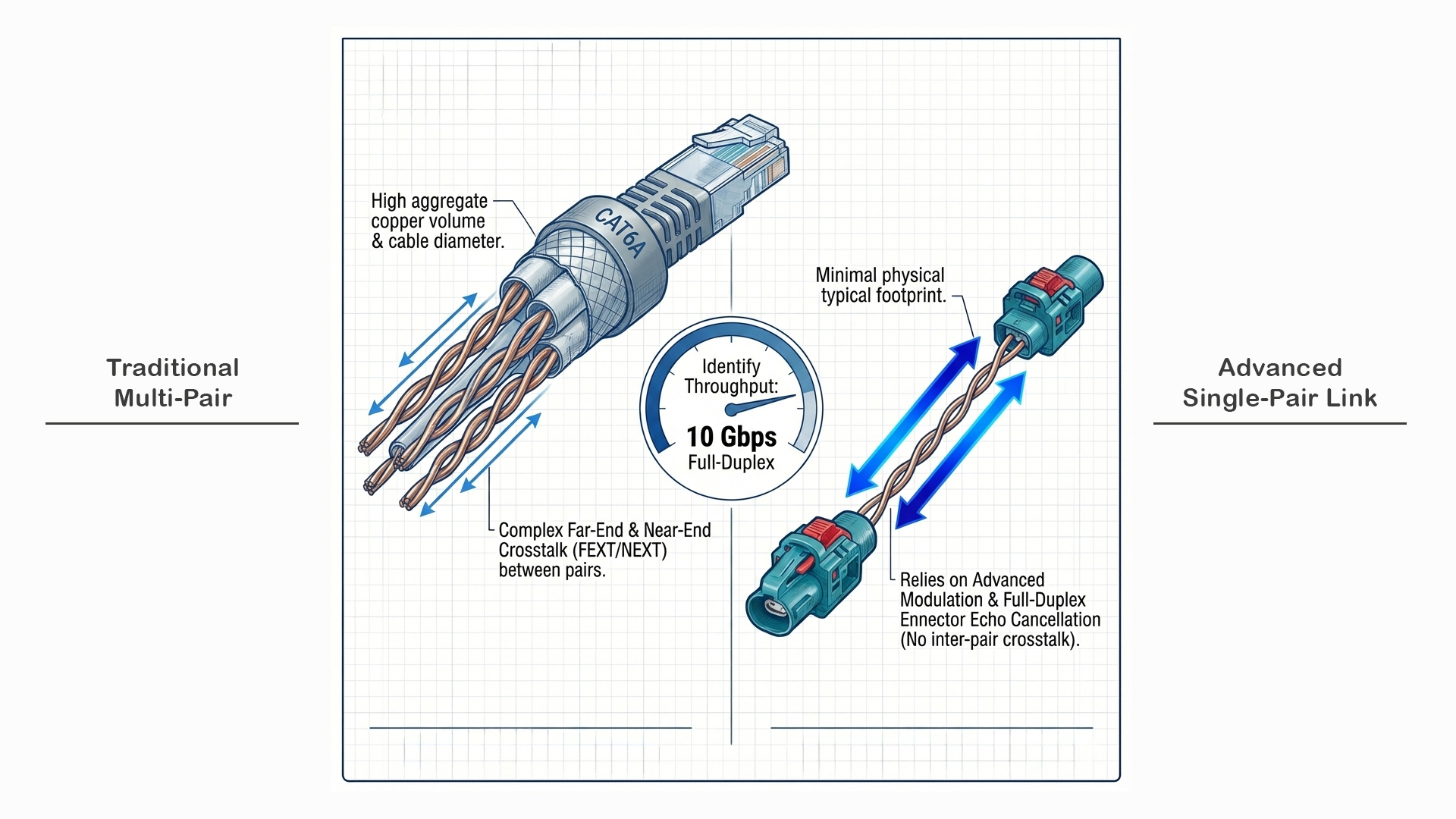

In multi-pair cables like traditional 10GBASE-T, near-end and far-end crosstalk (NEXT/FEXT) between the four twisted pairs dominate system design, compounded by alien crosstalk from neighboring cables in bundles.

Single-pair Ethernet (10GBASE-T1) eliminates internal pair-to-pair crosstalk entirely since only one pair exists—there are no other pairs within the cable to couple with. However, shielded cabling is still required to mitigate alien crosstalk from adjacent cables in automotive harnesses and to protect against external electromagnetic interference (EMI). This is a significant simplification compared to multi-pair systems, but it doesn't eliminate all crosstalk concerns.

10GBASE-T1 requires precisely controlled 100Ω differential impedance. Connectors (up to four allowed per 15-meter link), splices, and PCB transitions introduce impedance discontinuities, causing reflections that distort symbols and reduce eye openings. This is especially critical at multi-GHz frequencies, where even small impedance variations (±5Ω) can cause significant signal-integrity issues.

The standard specifies stringent return loss requirements across the entire 4 GHz bandwidth to ensure reflections remain manageable.
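To get a feel for how an impedance step translates into a reflection, the sketch below computes the reflection coefficient Γ = (Z_L − Z₀) / (Z_L + Z₀) and the corresponding return loss for a single, purely resistive discontinuity on a 100 Ω line. This is a simplified model: it ignores frequency dependence and the interaction of multiple discontinuities, and it does not reproduce the return-loss limits of the standard. The load impedances are illustrative values.

```python
import math


def reflection_coefficient(z_load, z0=100.0):
    """Voltage reflection coefficient at a single impedance step."""
    return (z_load - z0) / (z_load + z0)


def return_loss_db(z_load, z0=100.0):
    """Return loss in dB (larger is better); infinite for a perfect match."""
    gamma = abs(reflection_coefficient(z_load, z0))
    return math.inf if gamma == 0 else -20 * math.log10(gamma)


# Illustrative load impedances around the nominal 100 ohm differential value.
for z in (95.0, 100.0, 105.0, 120.0):
    print(f"Z = {z:5.1f} ohm  gamma = {reflection_coefficient(z):+.4f}  "
          f"RL = {return_loss_db(z):.1f} dB")
```

In a full-duplex link, reflected transmit energy lands in the local receiver alongside the much weaker remote signal, so the reflections from every connector and PCB transition add to the burden on the echo canceller.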

Thermal noise, impulse noise, and external RF coupling all degrade signal-to-noise ratio (SNR). As data rates increase, required SNR margins become increasingly tight. 10GBASE-T1 operates with SNR margins around 20 dB, requiring aggressive equalization and forward error correction to maintain reliable operation.

This is the fundamental engineering trade-off that makes single-pair 10 Gbps both impressive and challenging.

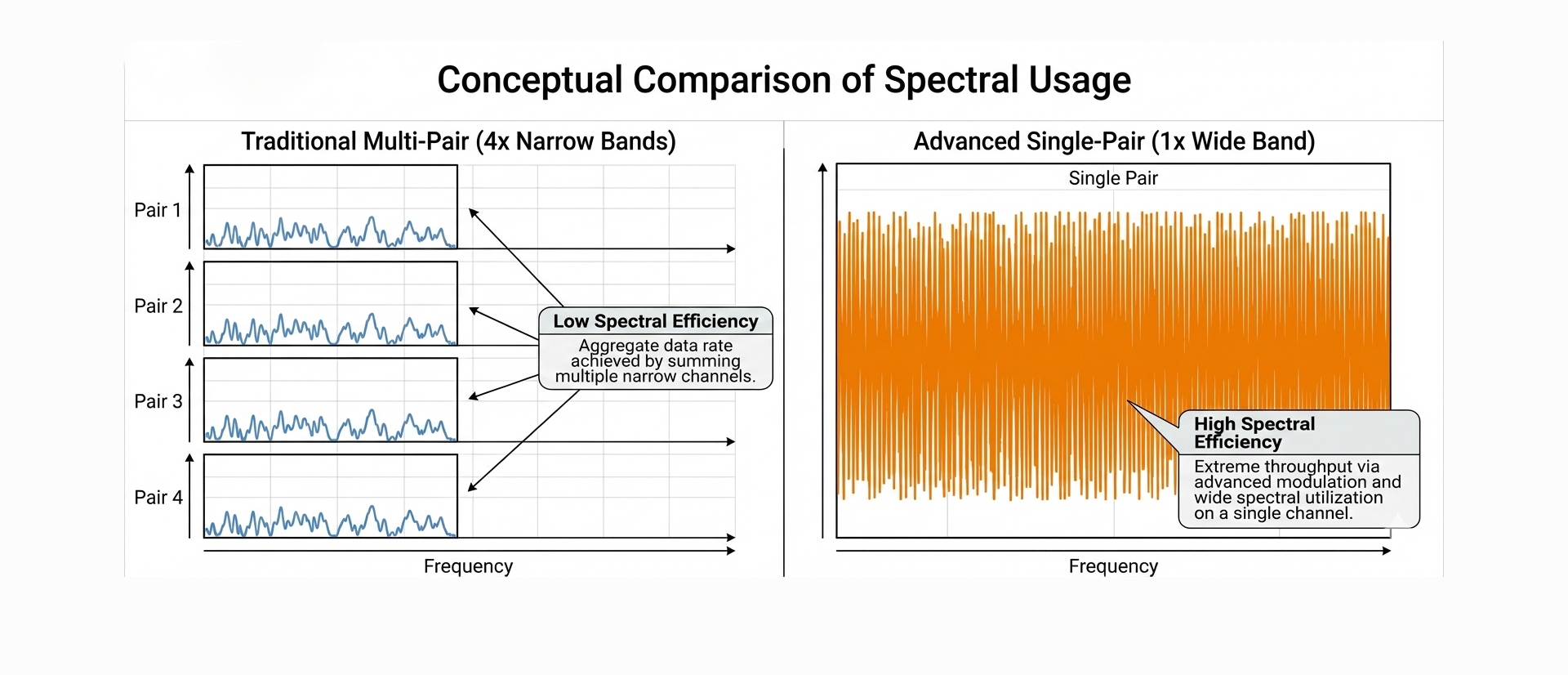

Traditional 10GBASE-T achieves 10 Gbps by splitting the load across four pairs:

Single-pair 10GBASE-T1 must carry all 10 Gbps on one conductor pair:

You might ask: why not use PAM-16 or PAM-32 to pack more bits per symbol and reduce bandwidth requirements?

The answer is noise margin. At 4 GHz over copper:

PAM-4 provides the best balance:

Higher-order modulation (PAM-8, PAM-16) would demand impractically high SNR at these frequencies and distances.

This massive frequency increase (500 MHz → 4 GHz) is only practical because:

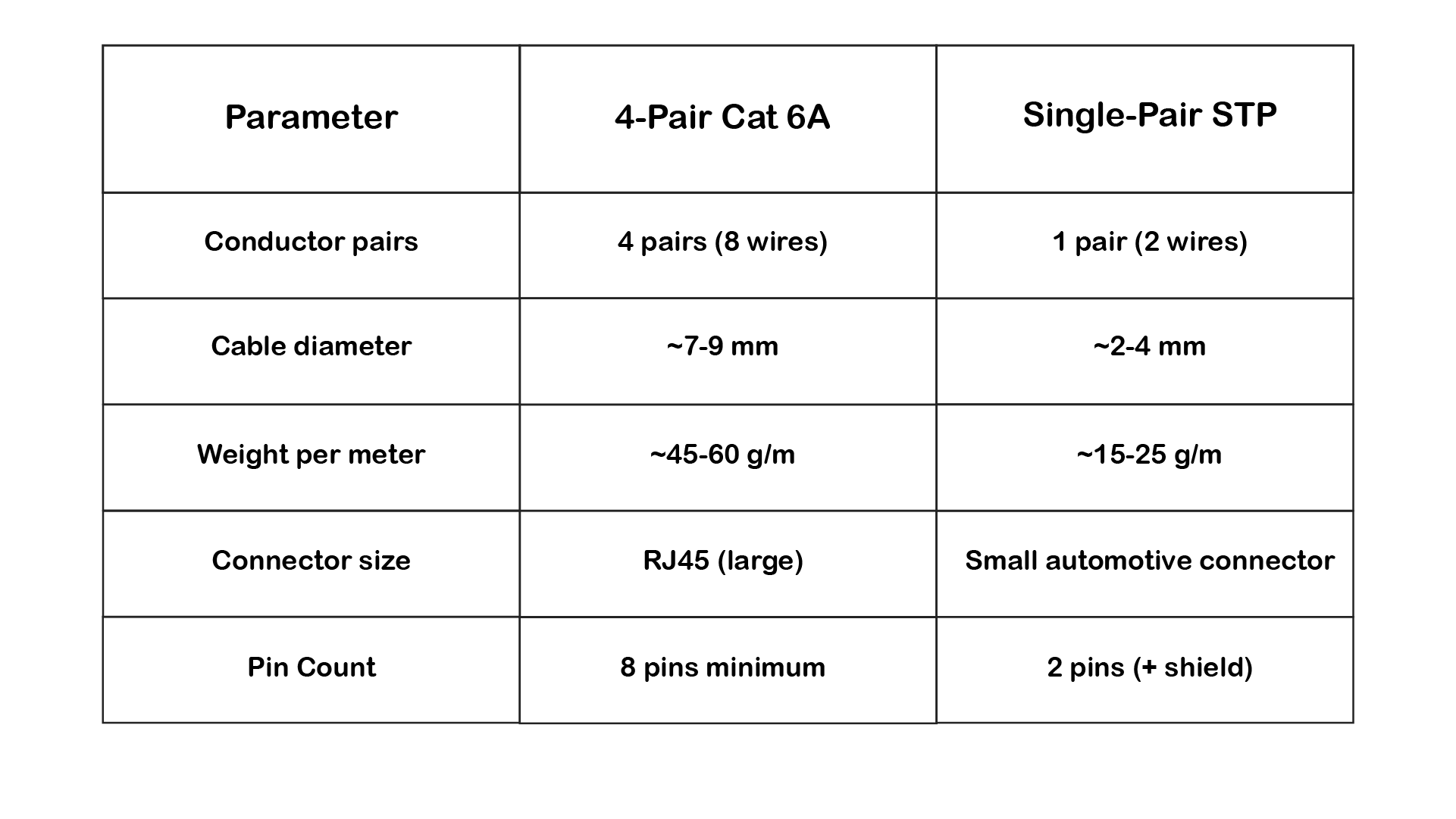

Before diving deeper into the 10 Gbps implementation, it's important to understand why the automotive industry invested heavily in single-pair Ethernet.

Modern vehicles contain kilometers of wiring:

Weight impact:

Single-pair vs. Four-pair comparison:

Example calculation:

Multiply by dozens of high-speed links → significant total savings.

Dashboard, door modules, and zonal ECUs are space-constrained. Large RJ45 connectors don't fit.

Single-pair automotive connectors:

These are 3-5× smaller than RJ45 and designed for:

Counter-intuitively, single-pair can be more expensive per meter due to:

But total system cost is lower:

Modern vehicles are transitioning from domain-based to zonal-based electrical architectures:

Old Domain Architecture:

New Zonal Architecture:

This requires:

Single-pair 10GBASE-T1 is purpose-built for this architecture:

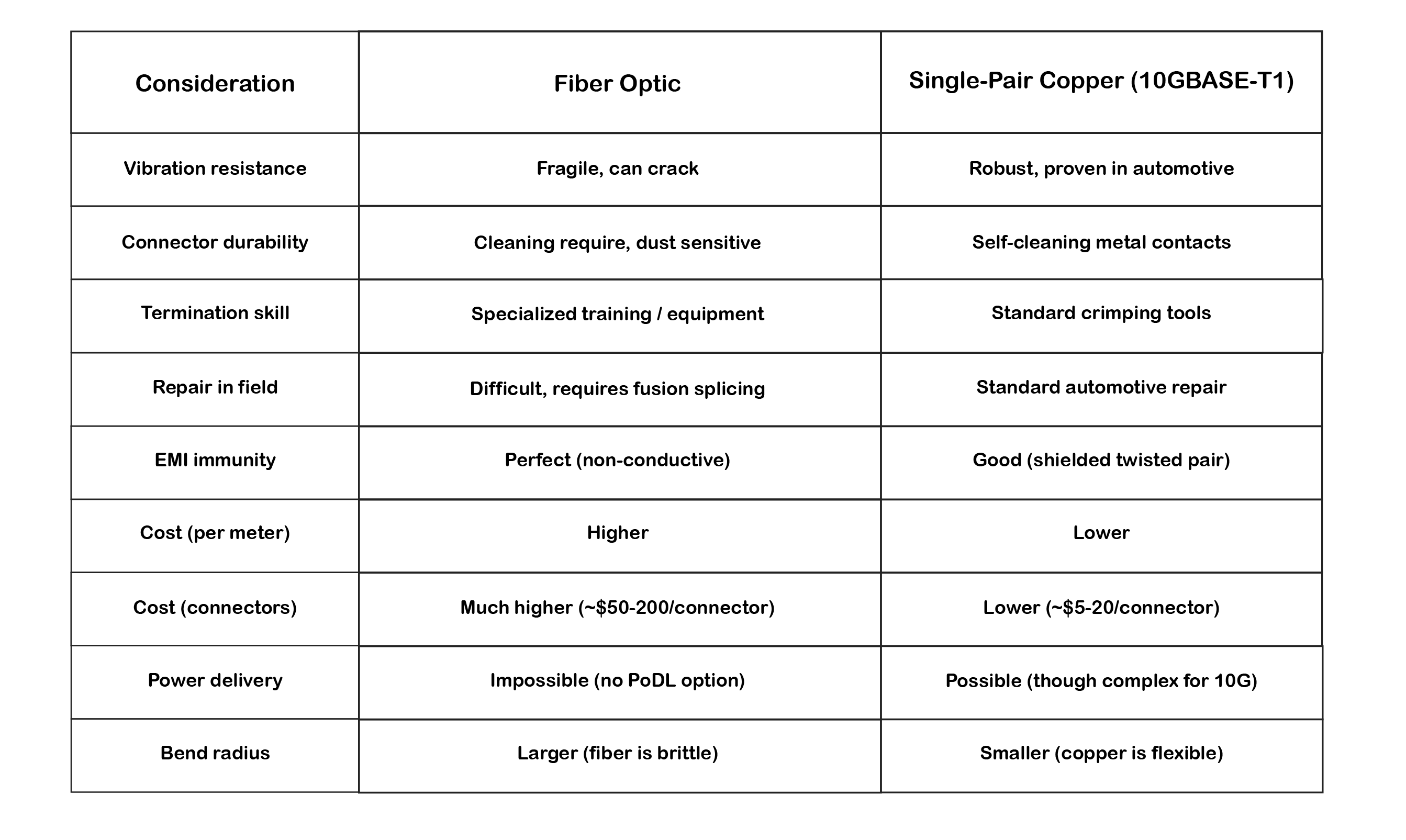

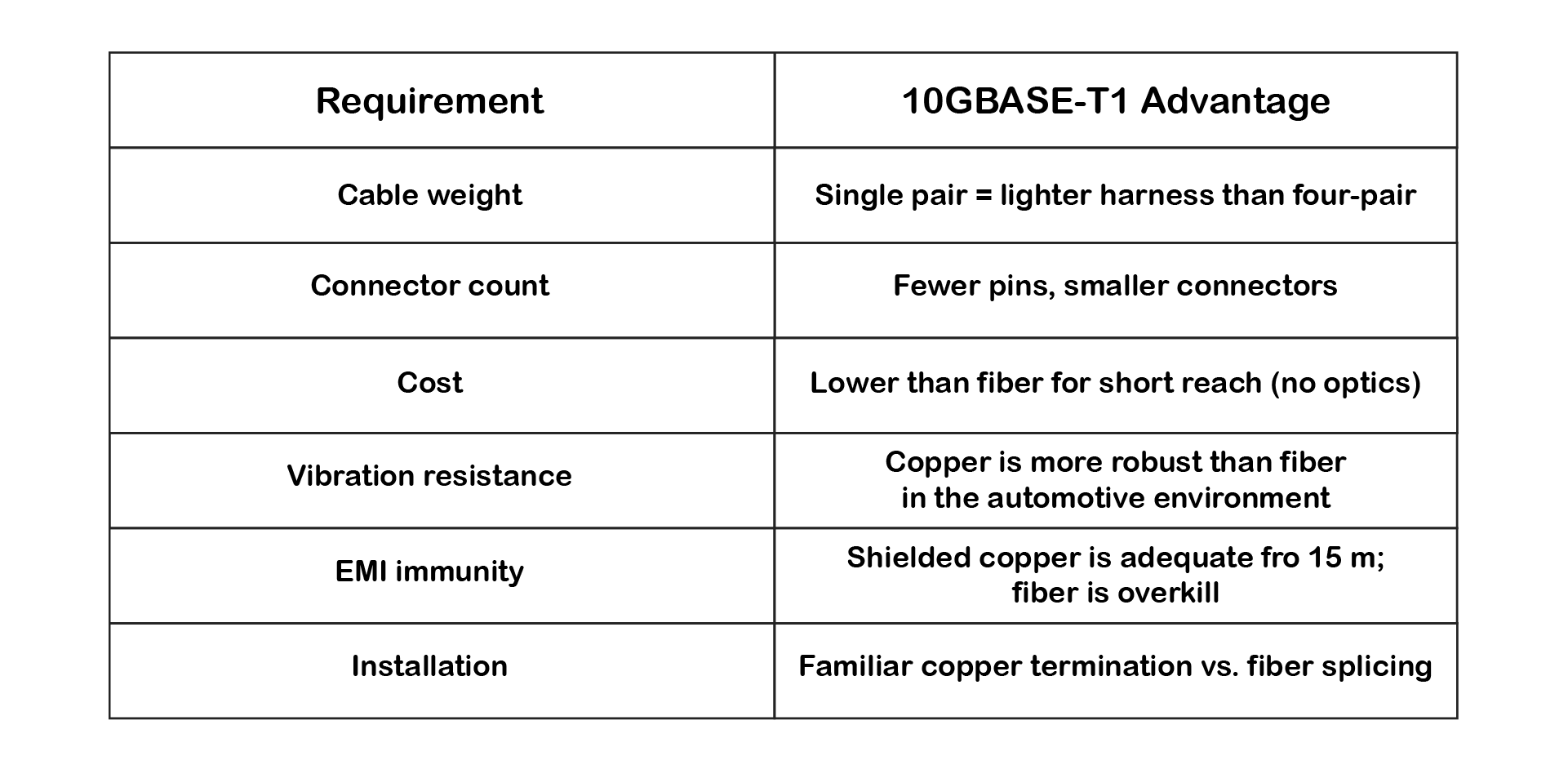

Valid question. Fiber has advantages (no EMI, theoretically unlimited bandwidth), but:

For 15-meter automotive applications, copper wins on:

Fiber makes sense for:

For the bulk of automotive high-speed networking, two-wire copper Ethernet hits the sweet spot.

At its core, data rate is governed by the Shannon-Hartley theorem:

C = B · log₂(1 + SNR)

Where:

For 10GBASE-T1, achieving 10 Gbps capacity over 15 meters requires:

For a single copper pair, increasing capacity requires one or more of the following:

In practice, all three are used simultaneously.
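The trade between bandwidth and SNR can be made concrete by inverting the Shannon-Hartley formula. The sketch below assumes an idealized flat channel with additive white Gaussian noise, so the numbers are theoretical lower bounds, not the SNR a real PHY needs. The 4 GHz and 500 MHz bandwidths are taken from this article.

```python
import math


def shannon_capacity_bps(bandwidth_hz, snr_db):
    """Shannon-Hartley capacity C = B * log2(1 + SNR) for a flat AWGN channel."""
    snr_linear = 10 ** (snr_db / 10)
    return bandwidth_hz * math.log2(1 + snr_linear)


def min_snr_db(target_bps, bandwidth_hz):
    """Smallest SNR (dB) at which the capacity reaches target_bps."""
    snr_linear = 2 ** (target_bps / bandwidth_hz) - 1
    return 10 * math.log10(snr_linear)


for bw in (500e6, 4e9):
    print(f"B = {bw / 1e9:.1f} GHz -> minimum SNR for 10 Gbps: "
          f"{min_snr_db(10e9, bw):.1f} dB")
```

Squeezing 10 Gbps into 500 MHz on one pair would require an SNR of roughly 60 dB, while spreading it over 4 GHz brings the theoretical floor below 7 dB. Practical systems need considerable margin above that floor, which is where modulation choice, equalization, and FEC come in.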

Traditional Ethernet was limited to relatively conservative frequency ranges to ensure robustness and compatibility. 10GBASE-T operates up to 500 MHz across four pairs. Single-pair 10GBASE-T1, however, must compress the entire 10 Gbps onto one pair, requiring bandwidth extension to approximately 4 GHz—eight times higher than multi-pair 10GBASE-T.

This dramatic frequency increase is only feasible over the short 15-meter automotive distances where attenuation remains manageable and sophisticated DSP can compensate for channel impairments.

The journey to 10GBASE-T1 didn't happen overnight. Single-pair Ethernet evolved through several generations, each pushing the frequency and complexity envelope:

But it all started with a vision.

In 2011, something remarkable happened in the automotive world. Volvo Technology and BMW independently came to the same conclusion: Ethernet is the future of in-vehicle networks.

At the time, this was controversial. The automotive industry had spent decades perfecting CAN and FlexRay. Why risk disrupting proven technologies?

The answer was data.

Emerging ADAS systems, high-definition cameras, and the early vision of autonomous driving made it clear: CAN's 1 Mbps and even FlexRay's 10 Mbps would not scale. The industry needed 100× to 1000× more bandwidth, and traditional automotive protocols couldn't deliver.

November 2011: The OPEN Alliance is Born

Industry leaders recognized they couldn't solve this alone. In November 2011, the OPEN Alliance Special Interest Group was formed with a singular mission: speed up the adoption of Ethernet in automotive in-vehicle networks.

The founding members included:

Their goal was ambitious: adapt commercial Ethernet technology to the harsh realities of automotive environments, including vibration, temperature extremes, EMI, cost constraints, and weight limitations.

One of the earliest real-world implementations came from Volvo Technology. They embarked on an ambitious project: implementing brake-by-wire over Ethernet.

This wasn't just about data logging or infotainment. This was safety-critical, real-time vehicle control over a network technology the automotive world had never used for such applications.

The challenge:

The team needed partners who understood both automotive requirements and Ethernet technology. This is where partner companies, including the founder's previous company, came in as members of Volvo's groundbreaking project.

Why this mattered: Brake-by-wire on Ethernet proved that Ethernet wasn't just for infotainment or diagnostics. It could handle the most demanding, safety-critical automotive applications, paving the way for steer-by-wire, throttle-by-wire, and eventually full drive-by-wire systems.

The lessons learned from this project directly influenced:

Armed with these early learnings, the industry embarked on a systematic evolution:

100BASE-T1 (2015 - IEEE 802.3bw):

1000BASE-T1 (2016 - IEEE 802.3bp):

2.5GBASE-T1 / 5GBASE-T1 (2020 - IEEE 802.3ch):

10GBASE-T1 (2020 - IEEE 802.3ch):

Key Pattern: Each speed increase brought:

This shows a systematic evolution in which lessons from 100BASE-T1 and 1000BASE-T1 deployments informed the design of 10GBASE-T1. The automotive industry created a family of compatible two-wire Ethernet technologies, each scaled for different bandwidth requirements.

A key design decision for automotive Single-Pair Ethernet was to standardize on a maximum length of 15 meters for high-speed variants (1G, 2.5G, 5G, 10G). This wasn't arbitrary; it reflects real automotive architecture:

Typical vehicle cable runs:

Why 15m is the sweet spot:

Over these distances:

Compared to traditional Ethernet distance targets:

Automotive Ethernet inverted the priority: Instead of maximizing distance, it maximized data density (Gbps per wire pair) within the distances that actually matter for vehicles and industrial machines.

This makes high-frequency copper transmission far more realistic than attempting similar speeds over 100-meter office/datacenter distances.

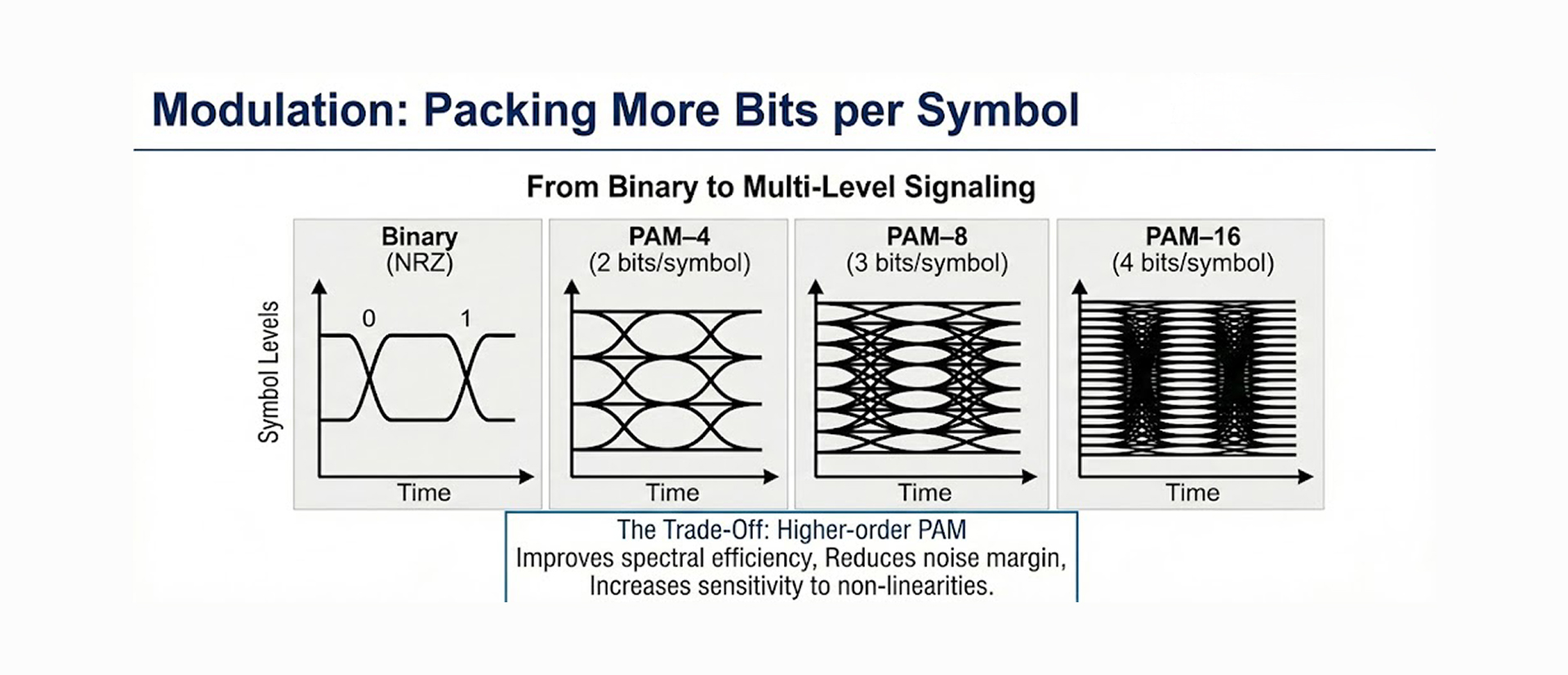

Once bandwidth is extended to 4 GHz, the next lever is modulation efficiency.

Early Ethernet used simple binary signaling (NRZ: Non-Return-to-Zero). Modern systems rely on Pulse Amplitude Modulation (PAM), where each symbol represents multiple bits.

Common PAM schemes:

10GBASE-T1 uses PAM-4 specifically because:

Higher-order PAM:

For 10GBASE-T1, the decision to use PAM-4 rather than PAM-8 or PAM-16 reflects the practical SNR limits at 4 GHz over copper. Even with this "conservative" PAM-4 choice, advanced DSP is still required to recover the signal reliably.
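The trade-off between PAM order, symbol rate, and noise margin can be sketched numerically. The example below makes several assumptions: it considers the 10 Gbps payload rate only (ignoring FEC and framing overhead, so real line rates are higher), it compares eye height at a fixed peak-to-peak swing, and the Gray-coded bit mapping is a common textbook choice and not taken from the standard.

```python
import math


def bits_per_symbol(levels):
    """Bits carried by one PAM symbol; levels must be a power of two."""
    if levels < 2 or levels & (levels - 1):
        raise ValueError("levels must be a power of two >= 2")
    return levels.bit_length() - 1


def symbol_rate_baud(bit_rate_bps, levels):
    """Symbol rate needed to carry bit_rate_bps with PAM-<levels>."""
    return bit_rate_bps / bits_per_symbol(levels)


def eye_penalty_db(levels):
    """Eye-height loss versus NRZ at the same peak-to-peak swing."""
    return 20 * math.log10(levels - 1)


# Assumed Gray mapping: adjacent levels differ in exactly one bit.
GRAY_PAM4 = {(0, 0): -3, (0, 1): -1, (1, 1): 1, (1, 0): 3}


def pam4_encode(bits):
    """Map an even-length bit sequence to PAM-4 levels."""
    if len(bits) % 2:
        raise ValueError("PAM-4 needs an even number of bits")
    return [GRAY_PAM4[(bits[i], bits[i + 1])] for i in range(0, len(bits), 2)]


for m in (2, 4, 8, 16):
    print(f"PAM-{m:<2}: {symbol_rate_baud(10e9, m) / 1e9:.2f} GBd, "
          f"eye penalty {eye_penalty_db(m):.1f} dB")
print(pam4_encode([0, 0, 0, 1, 1, 1, 1, 0]))
```

Each doubling of the level count saves symbol rate but shrinks the spacing between levels, so the SNR cost grows faster than the bandwidth saving. PAM-4 already gives up about 9.5 dB of eye height relative to NRZ at the same swing.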

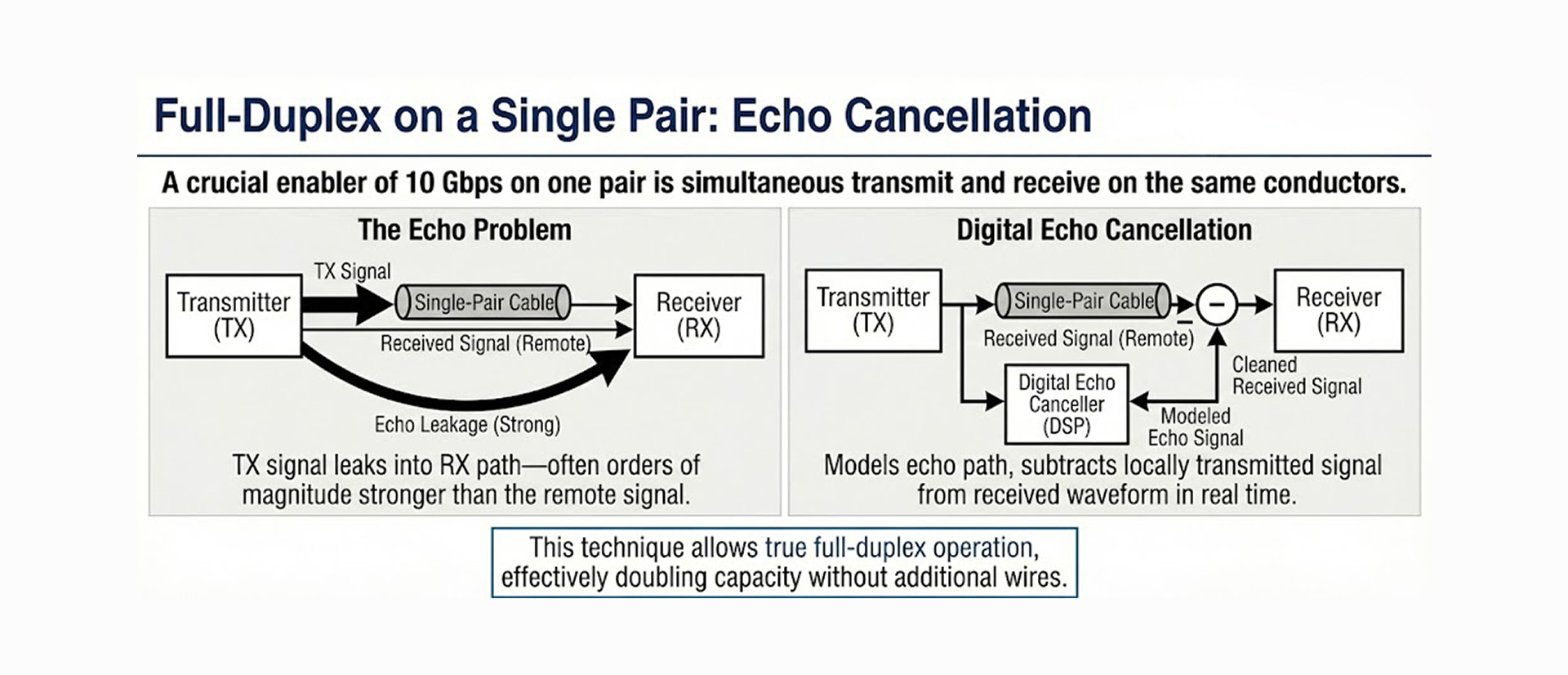

A crucial enabler of 10 Gbps over a single pair is simultaneous transmit and receive on the same conductors—true full-duplex operation.

When transmitting and receiving concurrently on a single pair, the transmitted signal leaks into the receiver path—often 60-80 dB stronger than the remote signal. This "near-end echo" would completely swamp the desired signal without mitigation.

Imagine trying to hear a whisper while shouting into a megaphone—that's the scale of the problem.

Modern PHYs solve this by:

01. Modeling the echo path digitally

- Characterizes how the transmit signal couples into the receiver

- Accounts for hybrid circuit imperfections

- Models reflections from impedance mismatches

02. Continuously subtracting the locally transmitted signal from the received waveform

- Creates a replica of the echo

- Subtracts it in the digital domain with high precision

- Reveals the much weaker remote signal underneath

03. Adapting in real time to temperature, aging, and impedance changes

- Echo path changes with temperature (cable resistance varies)

- Adaptive algorithms continuously update coefficients

- Handles connector variations and aging effects

This technique, pioneered in DSL (Digital Subscriber Line) and perfected in multi-gigabit Ethernet, allows true full-duplex operation, effectively doubling capacity without additional wires.
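The three steps above can be illustrated with a toy adaptive echo canceller. This is a minimal sketch, not the structure of any particular PHY: it uses a plain LMS update, a hypothetical three-tap echo path, and a remote signal scaled to be far weaker than the echo. All numeric values are invented for the demonstration.

```python
import random


def lms_echo_canceller(tx, rx, num_taps, mu):
    """Subtract an adaptive FIR estimate of the echo of tx from rx.

    Returns (cleaned, taps). The error signal doubles as the cleaned output.
    """
    taps = [0.0] * num_taps
    history = [0.0] * num_taps          # recent transmit samples, newest first
    cleaned = []
    for x, r in zip(tx, rx):
        history = [x] + history[:-1]
        echo_estimate = sum(w * h for w, h in zip(taps, history))
        error = r - echo_estimate       # remote signal plus residual echo
        taps = [w + mu * error * h for w, h in zip(taps, history)]
        cleaned.append(error)
    return cleaned, taps


random.seed(1)
PAM4 = (-3, -1, 1, 3)
echo_path = [0.8, 0.3, -0.1]            # hypothetical echo impulse response
n = 20000
tx = [random.choice(PAM4) for _ in range(n)]
remote = [0.01 * random.choice(PAM4) for _ in range(n)]  # weak far-end signal

rx = []
for i in range(n):
    echo = sum(h * tx[i - k] for k, h in enumerate(echo_path) if i - k >= 0)
    rx.append(echo + remote[i])

cleaned, taps = lms_echo_canceller(tx, rx, num_taps=4, mu=0.005)
print("learned taps:", [round(w, 3) for w in taps])
```

The canceller never sees the echo path directly. It learns it from the correlation between what was transmitted and what remains in the received signal, which is also why it can keep tracking when temperature or aging changes the path.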

For 10GBASE-T1:

Without echo cancellation, you would need either half-duplex operation, which sacrifices throughput, or separate transmit and receive pairs, which doubles cable complexity.

At multi-GHz symbol rates, copper channels behave like severe low-pass filters with frequency-dependent phase distortion. At 4 GHz, a 15-meter cable can exhibit 40-50 dB of attenuation, essentially obliterating high-frequency components.

The transmitter intentionally boosts high-frequency components relative to low-frequency ones (pre-emphasis) so that, after channel attenuation, the received spectrum is approximately flat.

Example:

This is analogous to shouting louder at high pitches so that, after traveling through walls, all pitches are equally audible.
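A minimal way to see this effect is a two-tap FIR filter, y[n] = x[n] − α·x[n−1], which attenuates slowly varying content and emphasizes transitions. The sketch below is illustrative only: the tap value α = 0.25 is hypothetical, and real transmitters typically use more taps and rescale the output to respect a fixed peak swing.

```python
import cmath
import math


def pre_emphasis(symbols, alpha):
    """Two-tap FIR pre-emphasis: y[n] = x[n] - alpha * x[n-1]."""
    out = []
    prev = 0.0
    for x in symbols:
        out.append(x - alpha * prev)
        prev = x
    return out


def gain_db(alpha, freq_fraction):
    """Filter gain in dB at f = freq_fraction * symbol rate (0.5 = Nyquist)."""
    h = 1 - alpha * cmath.exp(-2j * math.pi * freq_fraction)
    return 20 * math.log10(abs(h))


alpha = 0.25                            # hypothetical tap weight
boost = gain_db(alpha, 0.5) - gain_db(alpha, 0.0)
print(f"high-frequency boost relative to DC: {boost:.2f} dB")
print(pre_emphasis([1, 1, 1, -1], alpha))
```

Repeated symbols come out smaller than the symbol at a transition. That is the time-domain view of boosting high frequencies: the edges, which the cable attenuates most, are sent with extra amplitude.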

Multiple equalization stages are commonly used in cascade:

01. Continuous-Time Linear Equalizer (CTLE)

- Analog equalizer in the receiver front-end

- Boosts high frequencies before ADC

- Improves ADC dynamic range

02. Feed-Forward Equalizer (FFE)

- Digital FIR filter

- Removes pre-cursor intersymbol interference (ISI)

- Typically 10-30 taps for 10GBASE-T1

03. Decision-Feedback Equalizer (DFE)

- Uses previously detected symbols to cancel post-cursor ISI

- Highly effective but sensitive to error propagation

- Typically 5-15 taps

These work together to:

Modern PHYs continuously adapt equalization coefficients based on channel conditions using algorithms like:

This enables stable operation even on:

The result: A channel that would be completely unusable without equalization becomes capable of reliable 10 Gbps transmission.
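To illustrate how much a decision-feedback stage can recover, the sketch below passes PAM-4 symbols through a toy channel with two post-cursor ISI taps and compares a plain slicer against a DFE. It is a simplified model under stated assumptions: the channel taps and noise level are invented, and the feedback taps are fixed and assumed to match the channel exactly, whereas a real PHY adapts them continuously.

```python
import random

PAM4 = (-3, -1, 1, 3)


def slice_pam4(value):
    """Decide the nearest PAM-4 level."""
    return min(PAM4, key=lambda level: abs(value - level))


def dfe_detect(received, feedback_taps):
    """Cancel post-cursor ISI using past decisions, then slice."""
    decisions = []
    depth = len(feedback_taps)
    for r in received:
        recent = reversed(decisions[-depth:])      # newest decision first
        isi = sum(b * d for b, d in zip(feedback_taps, recent))
        decisions.append(slice_pam4(r - isi))
    return decisions


random.seed(7)
post_cursor = [0.5, 0.2]                # hypothetical post-cursor ISI taps
sent = [random.choice(PAM4) for _ in range(5000)]

received = []
for n, s in enumerate(sent):
    isi = sum(h * sent[n - k - 1]
              for k, h in enumerate(post_cursor) if n - k - 1 >= 0)
    received.append(s + isi + random.gauss(0, 0.05))

errors_plain = sum(slice_pam4(r) != s for r, s in zip(received, sent))
errors_dfe = sum(d != s for d, s in zip(dfe_detect(received, post_cursor), sent))
print(f"symbol errors without DFE: {errors_plain}, with DFE: {errors_dfe}")
```

The same structure also shows the error-propagation risk: the feedback term is built from past decisions, so a single wrong decision corrupts the ISI estimate for the following symbols.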

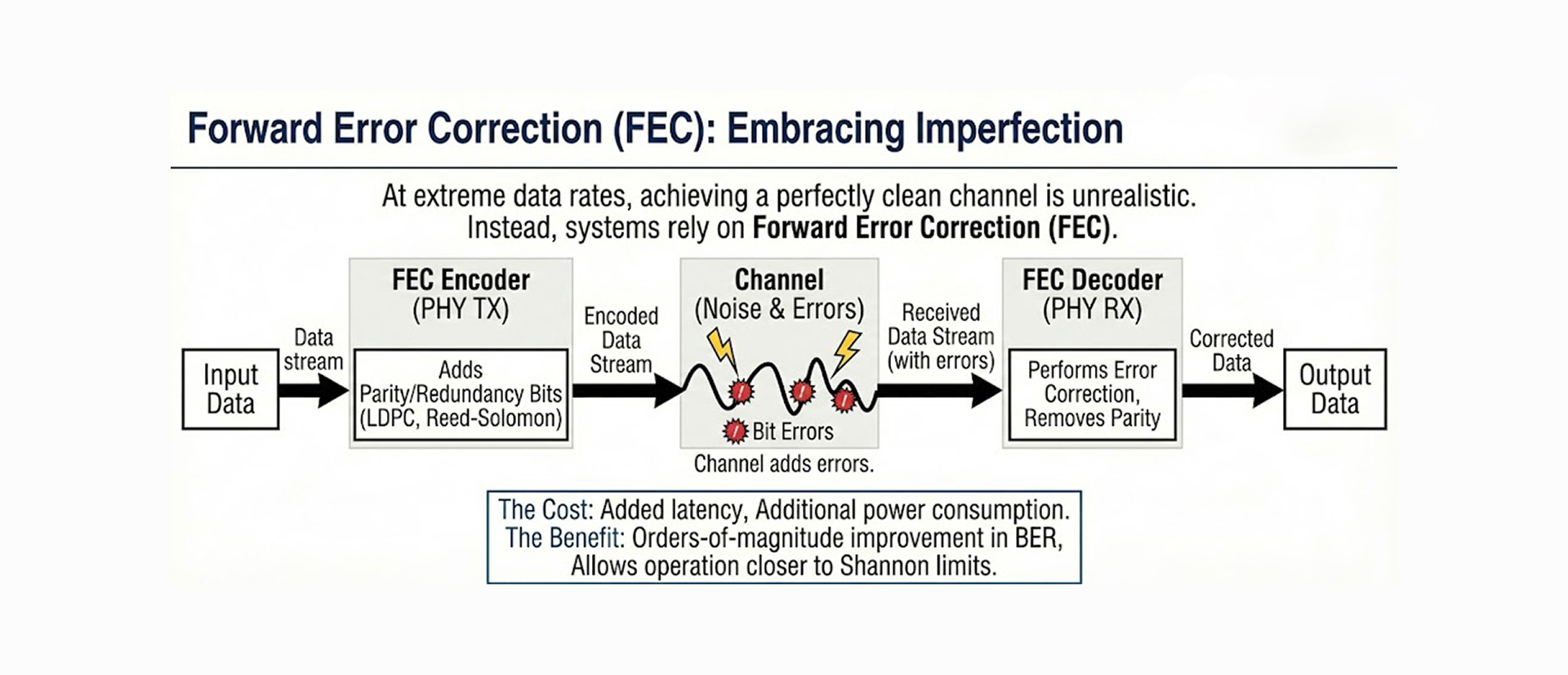

At extreme data rates over challenging channels, achieving a perfectly clean signal is unrealistic. Instead, systems rely on Forward Error Correction (FEC) to tolerate a certain level of residual errors.

Even after aggressive equalization:

FEC allows operation closer to Shannon limits by correcting residual bit errors after detection.

LDPC (Low-Density Parity Check) codes:

RS-FEC (Reed-Solomon):

The cost of FEC:

The benefit:

Practical example:

A less obvious challenge is power consumption and heat dissipation.

High-speed ADCs, DACs, and DSP blocks required for 10GBASE-T1 consume significant power:

Total PHY power consumption: Typically 2-4 watts per port

This generates substantial heat that must be managed, especially in:

Thermal management strategies:

Power over Data Lines (PoDL), first standardized in IEEE 802.3bu, is proven for lower-speed Single Pair Ethernet variants (10BASE-T1L, 100BASE-T1, 1000BASE-T1). For 10GBASE-T1, however, PoDL adoption is held back by technical and systemic challenges rather than by technological immaturity:

Despite these challenges, PoDL remains viable and beneficial for specific 10GBASE-T1 applications, such as remote ADAS cameras, where running separate power is difficult.

PoDL is also viable in 1000BASE-T1 applications such as:

For backbone ECU-to-ECU links, separate power delivery typically remains the pragmatic choice.

Single-pair 10 Gbps is not a universal replacement for fiber or traditional multi-pair Ethernet. It excels in specific scenarios where its unique characteristics provide clear advantages:

Automotive Backbone Networks:

Industrial Automation:

Embedded and Edge Computing:

In environments where cable weight, connector count, harsh-environment resistance, and cost matter more than maximum distance, 10GBASE-T1 offers compelling advantages.

As CMOS process nodes advance and DSP efficiency increases, the feasibility of ultra-high-speed copper links continues to expand. What once required laboratory-grade equipment and expertise is increasingly becoming production silicon available to automotive Tier 1 suppliers.

Beyond 10 Gbps on Two Wires:

Lower-speed variants continuing to proliferate:

Integration and power reduction:

IEEE 802.3 ongoing work:

OPEN Alliance (automotive consortium):

Broader ecosystem development:

Zonal architectures and software-defined vehicles are becoming mainstream:

Sensor fusion requiring aggregation of multi-Gbps streams:

2015-2018: Early adoption (100BASE-T1, 1000BASE-T1)

2019-2022: Volume production (1000BASE-T1 mainstream)

2023-2025: Multi-gigabit transition (2.5G/5G/10GBASE-T1)

2026-2030: Widespread deployment (projected)

The boundary between copper and fiber will continue to blur, especially for short-reach applications where copper's mechanical simplicity, robustness, and familiarity offer compelling advantages over the theoretical superiority of optics.

However, two-wire copper has found its niche:

The question is no longer "Can copper do it?" but rather "Where does two-wire copper make the most economic and engineering sense?"

For automotive and industrial automation within 15 meters, the answer is increasingly clear: two-wire 10GBASE-T1 is the pragmatic choice.

Achieving 10 Gbps on a single copper pair (specifically, 10GBASE-T1 over 15 meters) is not magic. It is the logical outcome of:

For engineers, it represents a fascinating intersection of theory and practice, pushing a legacy medium far beyond its original intent while respecting fundamental physical limits.

Key takeaways:

The automotive industry's adoption of 10GBASE-T1 demonstrates that with sufficient engineering effort and system-level optimization, traditional assumptions about copper's limits can be challenged, at least within well-defined operating envelopes.

As vehicles become data centers on wheels and industrial automation demands real-time multi-gigabit connectivity, 10GBASE-T1 provides a pragmatic engineering solution: extreme performance where you need it, within the constraints of what copper can realistically deliver.

IEEE Standards:

Industry Organizations:

Technical Deep Dives: